by Hyunjin Kim*

The US Department of Justice is suing Google for using distribution agreements and other practices that the law enforcement authority says give the company an unfair advantage in internet search and search advertising. Google argues that its product is simply better. “People use Google because they choose to – not because they’re forced to or because they can’t find alternatives,” it said in a statement.

Coincidentally, in research unconnected with the DOJ case, Michael Luca of Harvard Business School and I examined Google’s claim to superior quality in one aspect of internet search: online reviews. Our findings, published in Management Science, suggest that consumers prefer search results that give them alternatives. In fact, participants in our experiment appeared to perceive Google reviews to be of lower quality than those of its competitors.

Tying strategy

We set out to explore concerns that when platform companies “tie” products to their dominant businesses as they enter adjacent markets, they may be suppressing competition and harming consumer interests. Few Microsoft Windows users would have been able to avoid Internet Explorer, and few Apple iPhone owners could have avoided Apple Maps. By presenting its own product as the default or making it more difficult for consumers to reach alternatives, a platform company may be exploiting human inertia to channel demand to itself.

This would not be much of an issue if their products were simply better. After all, quality – as well as choice – is the whole point of competition. Besides, by entering adjacent markets, platform companies increase competition in that market. Such is the argument of many dominant platforms, and it may well be true in some cases.

However, this claim should be evaluated product by product. Just because a company enters a new market successfully doesn’t mean its offering is superior to incumbents’. And even though an inferior product may turn off some people, a company could still profit from keeping users on its pages while crippling rivals.

In search of the best pizza

To assess whether a tying strategy helps companies enter new markets even when they have products of lower quality than existing ones, we investigated Google’s decision to put its online reviews front and centre in its dominant search engine when it entered the reviews market in 2010. It did so by developing a “OneBox” that sat on top of any organic results and excluded competitor reviews.

We ran an online experiment using UsabilityHub, a platform that enables companies to measure the effectiveness of webpage design and which has been used by many tech companies including Google, Amazon and eBay for product development. We recruited around 15,000 participants and selected the 100 largest US cities by population. Participants were asked to imagine that they had just used Google to look for a pizza restaurant in one of the cities.

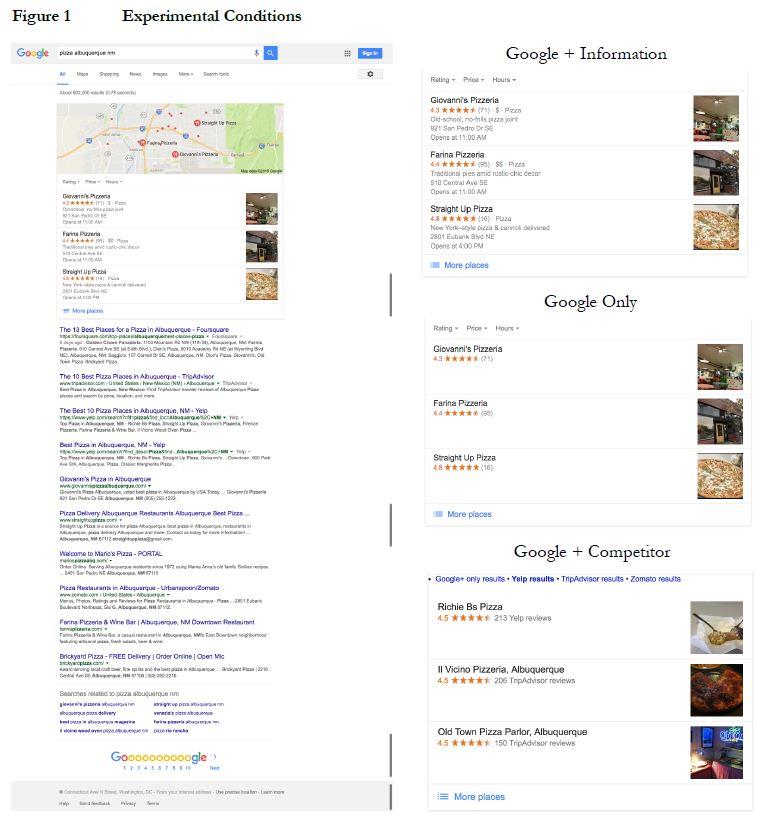

Each participant was randomly assigned to view one of three versions of the Google OneBox: one that showed only Google reviews (“Google Only”, as Google ultimately chose to show); one that included competitor reviews determined to be the best by Google’s own organic search algorithm (“Google + Competitor”); and Google’s actual search results at the time of the experiment that displayed information snippets such as restaurant hours and address (“Google + Information”).

Other than the review information presented in the OneBox, all three conditions provided identical screenshots of search results. We recorded where on the screen participants clicked as a measure of which results they preferred: on the OneBox, on any of the organic search results displayed below the OneBox, or elsewhere on the screen.
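For readers who want to see how a design like this can be analysed, here is a minimal sketch in Python. It is not the code used in our study: the function names are hypothetical, the click counts are invented purely for illustration, and the two-proportion z-test is one common way to compare click rates between two conditions, assumed here rather than taken from the paper.

```python
import math
import random

# The three OneBox versions described above.
CONDITIONS = ["Google Only", "Google + Competitor", "Google + Information"]


def assign_condition(rng):
    """Randomly assign one participant to one of the three conditions."""
    return rng.choice(CONDITIONS)


def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Compare click rates in two conditions.

    Returns (difference in click rates, z statistic, two-sided p-value),
    using the pooled-variance two-proportion z-test.
    """
    rate_a = clicks_a / n_a
    rate_b = clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        # No variation at all (nobody or everybody clicked): no evidence of a difference.
        return rate_a - rate_b, 0.0, 1.0
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a - rate_b, z, p_value


# Random assignment for a handful of illustrative participants.
rng = random.Random(0)
assignments = [assign_condition(rng) for _ in range(6)]
print(assignments)

# Hypothetical counts of OneBox clicks, NOT figures from the study.
diff, z, p = two_proportion_z_test(clicks_a=450, n_a=1000,
                                   clicks_b=500, n_b=1000)
print(f"difference in click rate: {diff:+.3f}, z = {z:.2f}, p = {p:.3f}")
```

Because assignment is random, a difference in click rates between two versions of the OneBox can be attributed to the version shown rather than to differences among the participants who happened to see it.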

We found that Google’s tying strategy of showing only Google reviews significantly reduced users’ probability of clicking on the OneBox by 5 percent, compared to showing competitor reviews. The results that included competitor reviews in fact showed, on average, three times as many reviews in total as the Google-only results. This implies that Google discarded about two-thirds of the available reviews in the process of excluding competitors. We also found that users respond to genuine product improvements, such as adding restaurant information to the reviews.

Even if Google had fewer reviews for this search than its competitors, could participants nonetheless perceive Google reviews to be of better quality? To find out, we ran an additional experiment in which participants were shown one of two versions of search results with the same number of reviews. The versions differed only in whether the reviews were labelled as Yelp and Tripadvisor reviews or as Google reviews.

We found that branding Yelp and Tripadvisor reviews as Google’s reduced clicks on the OneBox by 20 percent, suggesting that consumers may prefer content from multiple sources to content from Google alone, even when the number of reviews is held constant.

Knowledge is power

Taken together, our experiments suggest that Google provided fewer and lower-quality reviews than its competitors. Yet in 2011, only a year after the tech giant entered the online reviews market, it had amassed 3 million reviews – 20 percent of the total held by then-market leader Yelp, which had a six-year head start. Google also directed fewer users to Yelp: in 2012, 85 percent of Yelp user traffic came from Google; by mid-2016, that figure had fallen to 68 percent, even though Google’s overall share of the internet search market held stable at around 65 percent.

While the outcome of the Google antitrust lawsuit is far from certain, our paper provides experimental evidence suggesting that some practices of platform businesses may undercut competition and stifle overall market growth. Consumers, in turn, may end up with fewer choices and lower-quality products.

We also demonstrate how scholars and policymakers could empirically test suspected anticompetitive practices without the explicit cooperation of the company in question. Corporate managers could also use this method to better understand how such practices might affect their businesses – as well as ways to rise above the challenge.

*Assistant Professor of Strategy at INSEAD

**first published in: knowledge.insead.edu

By: N. Peter Kramer
