by Sarah Bressan*

This blog is part of EU-LISTCO, an innovative and timely project that investigates the challenges facing Europe’s foreign policy. A consortium of fourteen leading research institutions and universities aims to identify risks connected to areas of limited statehood and contested orders—and the EU’s ability to respond.

In April 2019, after at least 207 people were killed by terrorist bombings in Sri Lanka, the government blocked access to social media.

The reason was that disinformation (lies and fabricated news deliberately spread to cause harm) was said to have ignited violence against Muslims, who were being collectively punished for the brutal attacks.

The disinformation was only part of the picture, but the story showed the role it can play in escalating violence after a first spark.

Around the world, online disinformation has affected the outcome of elections, heightened social tensions, and led to the denial of scientific facts about immunization or climate change.

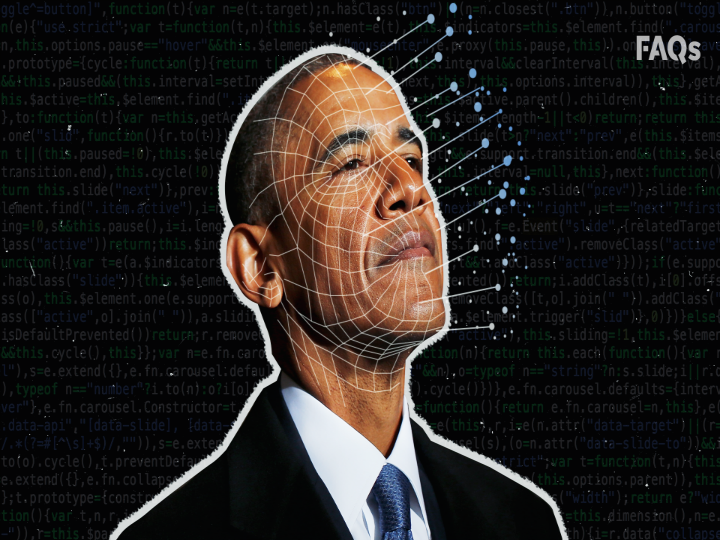

Deepfakes, which are manipulated or fabricated but highly realistic video or audio clips, have the potential to take the problem to another level: experts are already warning that deepfakes could threaten national security, democracy, and the world order.

Compared to text, fake recordings showing outrageous, threatening, or shocking behavior are more effective in triggering emotions like fear, anger, or hatred. Similar to the spread of false information on social media, which is hard to control and even harder to debunk once it takes root, deepfakes can be potent tools of propaganda.

But although apps such as FaceApp or Zao have already made deepfake technologies widespread and readily available on almost every smartphone, the EU has so far failed to address the problem.

In its action plan against disinformation, it acknowledges that “disinformation is a powerful and inexpensive—and often economically profitable—tool of influence,” but falls short on the actual action to prevent potential harm.

Its only notable effort, the EUvsDisinfo project against Russian propaganda, has been widely criticized for being understaffed and not up to the challenge.

Strategic foresight and scenario methods offer a way to better understand and prepare for the potential impact of new technologies like deepfakes.

In a recent foresight exercise on out-of-control technologies, selected experts identified overlooked or underrated technologies that could increase the risk of conflict and governance breakdown in Europe’s neighborhood.

In the scenarios they developed, deepfakes clearly stood out: A fake video showing an opposition candidate accepting a briefcase full of cash from an organized crime figure, in exchange for a promise not to prosecute his business interests, gives rise to violence. Fake videos of men wearing Christian symbols abducting Muslim women to sell as sex slaves spark violence between religious groups. Hackers feed deepfakes into a government surveillance system, leading to a series of false convictions.

These scenarios help to better understand how the targeted use of deepfakes by the wrong people at the right time can contribute to violence.

Well-known risk factors for violence include group-based marginalization, polarization, and a history of conflict. Under such conditions, and in volatile situations such as the run-up to contested elections, uncontrolled deepfakes are particularly dangerous: they can erode trust between communities with a recent memory of violence, and they can erode citizens' trust in political elites by reducing the perceived legitimacy of the government.

The most robust policy response to emerge from the foresight exercise was long-term investment in structural prevention against deepfakes. This includes investment in education, in technological skills, in a free and high-quality media landscape, and in social trust between communities, all important aspects of social cohesion and societal resilience against violent conflict.

The good news is that the EU’s foreign policy is already based on conflict prevention through resilience-building, understood as the capacity to undergo adaptation and transformation in the face of change.

But such conflict prevention can only be successful in practice if sustained over a long period of time, and it’s currently difficult to get the necessary political and financial support.

What’s more, media platforms and the technologies to spread disinformation are playing fields of geopolitical rivalry. So the EU has to be realistic about the influence its money and diplomacy can have in these areas.

The deepfake scenarios also show what will be necessary if long-term prevention fails. The more out of control a technology gets, and the lower societal resilience is against its effects, the greater the need will be for targeted solutions to prevent conflict.

Among such targeted solutions, the experts in the foresight exercise discussed banning, criminalizing, or controlling the creation and spread of deepfakes.

They suggested that governments invest in research and verification tools to win the arms race between “detection and generation,” or hold social media companies accountable for stopping deepfakes from spreading.

But such targeted measures also come with tradeoffs and unintended side effects.

Government-run verification tools would be successful in situations where trust in government authorities is high. But they would probably not work in places or situations in which this very trust is being undermined by disinformation.

Holding social media companies accountable could work when business, government, and societal interests are aligned and verification is reliable. However, it would be impossible or outright dangerous in scenarios where corrupt elites control both government and businesses, or when social media companies simply do not care or cannot access end-to-end encrypted communication.

In the wider foreign policy community, some proposals for targeted solutions go as far as suggesting that politicians and other public figures use “life-logging equipment . . . and authenticated storage services, similar to body cameras for police officers” so that they always have a credible alibi as a defense against deepfakes.

But even in an ideal world in which such services were provided by reliable companies, sensitive to their users’ privacy concerns and accountable for respective breaches, most people would surely like to avoid recording around-the-clock footage of their private lives.

If what awaits is anything like the scenarios described above, there’s little time to lose. The EU’s peace project could be at stake.

Every effort to build resilience and trust ahead of time would save the EU many of the troubles that would undoubtedly come with technical solutions to such a political problem.

*a research associate at the Global Public Policy Institute (GPPi) in Berlin

**first published in: carnegieeurope.eu

By: N. Peter Kramer